May 11, 2026

By Brian Bastian, Head of Product

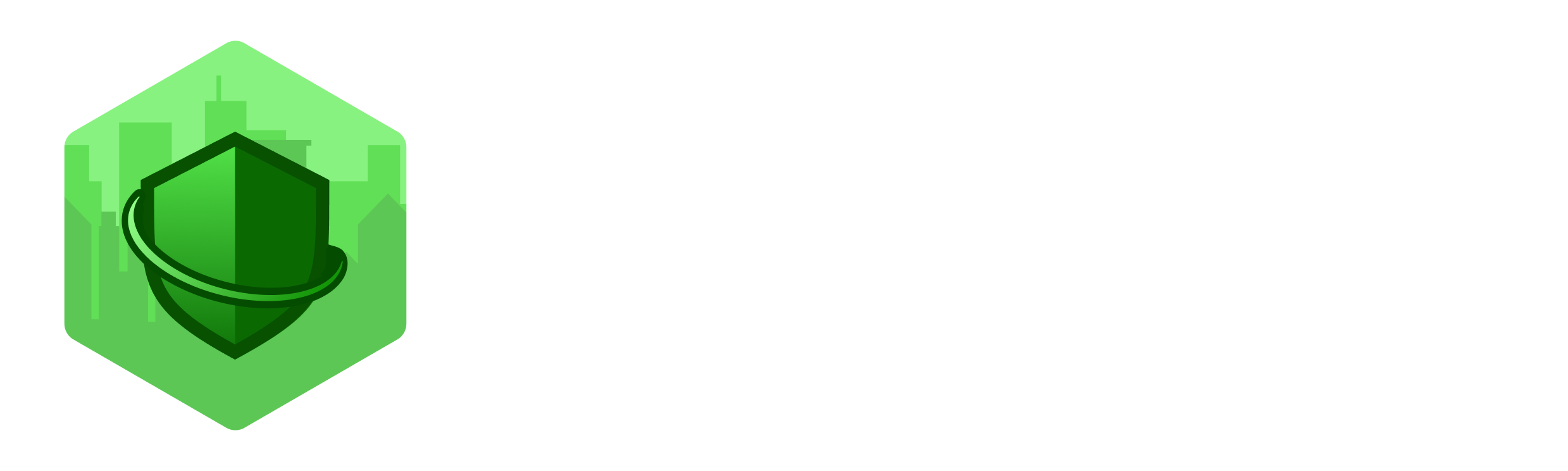

The U.S. Forest Service is closing 57 of its 77 research stations. That’s roughly three-quarters of the agency’s science infrastructure, spread across 31 states, being consolidated into a single operation in Fort Collins, Colorado.

The stated rationale is consolidation and efficiency, which the agency frames as “unifying research priorities.” Many of the scientists affected disagree with that characterization. One researcher quoted in the Bulletin of the Atomic Scientists called it “just madness.”

Whether or not you agree with the decision, the practical consequences for wildfire risk science are worth understanding. This isn’t an abstract policy story. The data these stations produce feeds directly into the models the insurance industry uses to price and manage wildfire exposure.

What These Stations Actually Do

These aren’t administrative offices. The stations scheduled for closure are working research facilities: ecologists, fire behavior scientists, meteorologists, and hydrologists doing regionally embedded work that takes years to build and can’t easily be replicated from a central hub.

Their output includes fire behavior models, smoke dispersion forecasts, fuel load assessments, and historical fire records. That’s the upstream data that flows into catastrophe models, informs evacuation planning, and shapes how incident commanders manage containment. It also underpins a good deal of the academic research that the insurance industry relies on for risk benchmarking.

An Inside Climate News investigation published April 24 raised an additional concern: that a century of historical Forest Service documents, including fire records, ecological data, and land surveys, could be lost entirely as the agency relocates headquarters and shutters regional stations. That kind of longitudinal data is genuinely irreplaceable.

The Specific Risks for Risk Professionals

The Pacific Wildland Fire Sciences Laboratory in Seattle is one of the stations at risk. It’s the primary source for wildfire smoke forecasts across the Pacific Northwest. After a hot, dry winter in the region, losing that forecasting capacity ahead of fire season is a real operational concern, not just for communities but for claims teams, reinsurers, and anyone running exposure models in that geography.

Smoke forecasts, for context, don’t just drive air quality alerts. They inform hospital surge planning, school closures, evacuation routing, and air asset deployment. Degraded forecasts mean degraded downstream decisions across the whole emergency management chain.

The broader fire behavior modeling picture is equally important. The models incident commanders use to predict fire spread, and that cat modelers use to calibrate event scenarios, rely on ongoing research inputs. Reduce that input stream, and the models age out of calibration faster.

A study published in Science Advances this month found that the number of wildfire-favorable weather hours in North America is 36% higher than it was 50 years ago. Fires are also burning longer into the night than historical patterns suggest, driven by drier overnight conditions. The science on wildfire behavior is getting more complex, not less. The research capacity to keep up with that complexity is heading in the wrong direction.

The Data Foundation Problem

Here’s the core issue for the insurance industry: wildfire CAT models are only as good as the underlying data. A significant portion of that data has historically come from federal sources: Forest Service research, USGS fire science, NOAA weather modeling. These aren’t the only inputs, but they’re a foundational layer.

When that layer degrades, modeled loss estimates diverge from actual ground conditions over time. That divergence tends to show up in loss ratios before it shows up in model outputs. Carriers and reinsurers who’ve been through prior model inadequacy cycles know how that plays out.
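For teams that want to watch for that divergence directly, here is a minimal sketch of one way to do it: compare actual losses to modeled losses period by period and flag when the recent average runs persistently high. The function names, the three-period window, the 15% tolerance, and the loss figures are all illustrative assumptions, not an industry standard or any vendor's method.

```python
from statistics import mean

def actual_to_modeled_ratios(modeled, actual):
    """Return actual/modeled loss per period. Values above 1.0 mean the model under-predicted."""
    return [a / m for m, a in zip(modeled, actual)]

def drift_flag(ratios, window=3, tolerance=0.15):
    """Flag drift when the mean of the most recent `window` ratios exceeds 1 + tolerance.

    `window` and `tolerance` are hypothetical defaults chosen for illustration.
    """
    recent = ratios[-window:]
    return mean(recent) > 1.0 + tolerance

# Made-up annual figures (in $M) for illustration only, not real portfolio data.
modeled = [10.0, 10.0, 10.0, 10.0, 10.0, 10.0]
actual = [9.0, 10.0, 11.0, 12.0, 13.0, 14.0]

ratios = actual_to_modeled_ratios(modeled, actual)
print(drift_flag(ratios))
```

A rolling window is used here so that a single bad year doesn't trigger the flag on its own. A real monitoring setup would also need to account for exposure changes and event volatility.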

The place-based data problem is the hardest part. Fuel accumulation trends, historical fire spread patterns, and microclimate data are built up over decades of regionally embedded observation. You can’t reconstruct that from Fort Collins. When those research teams disperse, the institutional knowledge goes with them.

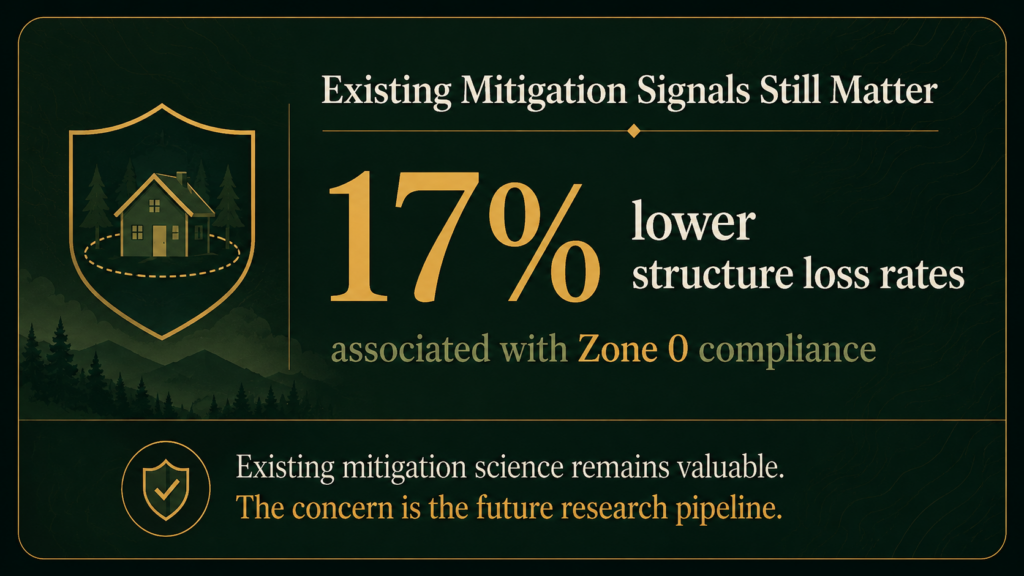

It’s worth noting that the mitigation science we do have is solid and isn’t going anywhere. UC Berkeley research and CAL FIRE inspection data consistently show that properties with proper defensible space and hardened structures have materially better loss outcomes. Zone 0 compliance alone (combustibles cleared within 5 feet of the structure) correlates with 17% lower structure loss rates. Carriers that price those factors accurately are on solid footing. The concern is about the ongoing research pipeline, not the existing body of knowledge.

What Risk Professionals Should Do Now

A few practical considerations for carriers, MGAs, and risk modelers:

- Audit your data dependencies. Understand how much of your cat model calibration relies on Forest Service research inputs. If your modeling vendor can’t answer that question clearly, it’s worth pressing.

- Build redundancy into your forecast monitoring. If Pacific Northwest smoke forecasting capacity degrades this season, your claims and exposure management teams need alternative data sources. That’s a workflow you want to set up before fire season, not during it.

- Weight property-level data more heavily. As regional public data degrades, the underwriting advantage shifts to carriers and MGAs with access to high-resolution, property-level risk assessment. Fuel load, structure construction type, defensible space condition, and local ignition history all become more differentiating, not less.

- Watch IBHS and university fire labs. The Insurance Institute for Business & Home Safety and several university-based fire research programs will continue producing credible, applicable research. Staying connected to those outputs is a reasonable hedge against federal data gaps.

The Bigger Picture

The insurance industry has relied on federal wildfire science as part of its shared data infrastructure for decades. That infrastructure is getting smaller at exactly the moment wildfire risk is getting larger. That’s a gap the market will need to fill, through private data investment, academic partnerships, or state-level programs.

The carriers and MGAs that recognize this shift early and adapt their underwriting and modeling approaches accordingly will have a real advantage. The ones that continue treating federal data as a stable, reliable foundation are taking on risk that isn’t showing up in their models yet.

Property Guardian provides carriers and MGAs with property-level wildfire risk assessment and mitigation intelligence that doesn’t depend on federal data sources. As the public data infrastructure contracts, that kind of independent, ground-truth assessment becomes a more important part of sound wildfire underwriting.

Sources

US Forest Service Closing Research Stations That Study Wildfire Risk — Salt Lake Tribune

Forest Service Plan to Close Research Stations Stokes Fear as Wildfire Season Approaches — Stateline

Forest Service Plan to Close Research Stations — The Spokesman-Review (April 25, 2026)